The Challenge

Low Adoption and Limited Insight

A key Cisco licensing platform suffered from low adoption, and little was known about its current user experience. The platform also lacked a cohesive vision, which made the project both a challenge and an opportunity for research.

Why It Mattered

• Low adoption was impacting critical business metrics.

• Frustrated customers were turning to alternative solutions.

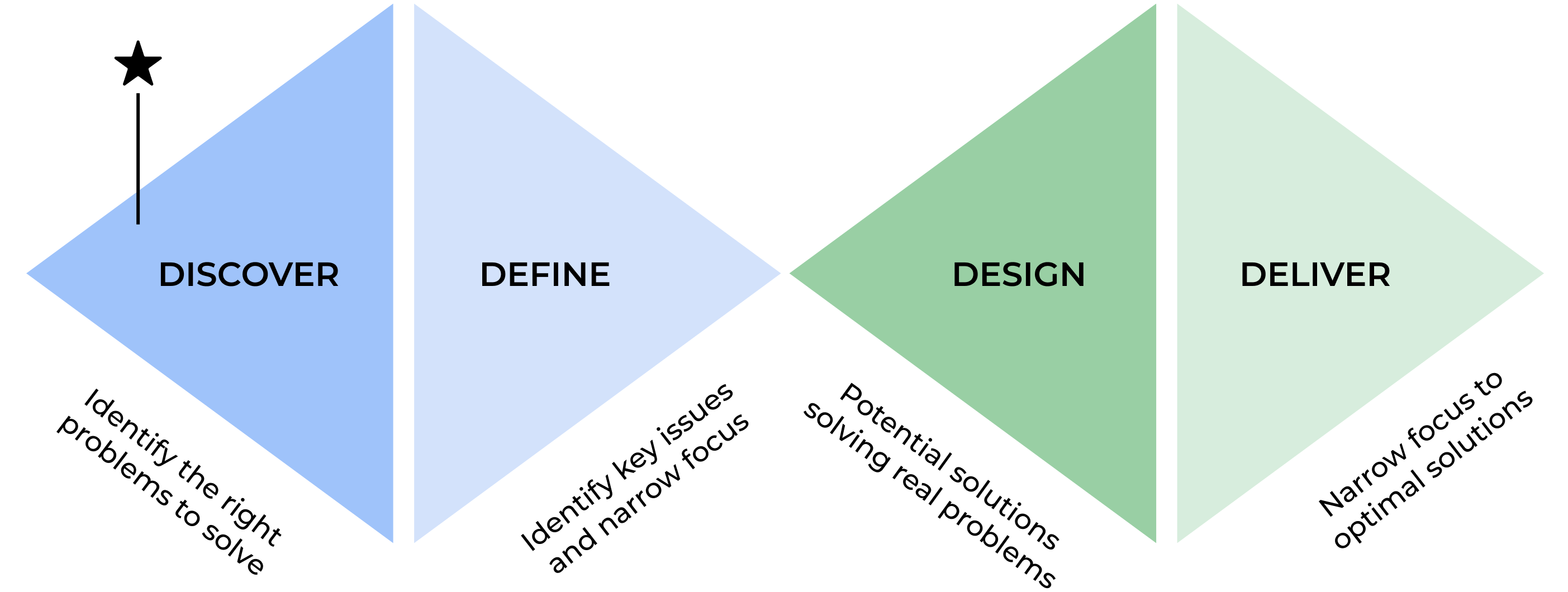

• Leadership recognized the need for a user-centered approach to improve the platform experience.